Years ago, I watched an episode of the PBS documentary series Frontline called Death of a Porn Queen.

It profiled Colleen Applegate, a small-town Minnesota teenager who ran away to Los Angeles in 1982 and soon after became the porn actress known as “Callie Aimes” and then, more famously, as “Shauna Grant.”

Blonde, rosy-cheeked and meaty, she was the fantasy girl for every dirty-old-man-next-door. She made 30 features and did numerous shorts and photo-shoots over just a year in the business.

But in March 1984, Colleen put a rifle to her temple and pulled the trigger. She didn’t die right away, but hung on in intensive care long enough for her family to fly in from the Midwest and unplug their daughter from life support.

The obvious move is to blame porn for Colleen Applegate’s suicide. But Colleen was a troubled young woman before she got into the business: her original flight to California was fuelled by rumours of a previous suicide attempt in her home town.

She also hadn’t actually made a porn movie in the year before her death (though she was about to re-enter porn following the incarceration of her boyfriend, a drug dealer who had also reportedly severed their relationship shortly before she shot herself).

Colleen’s depression may well have been exacerbated by the accumulated shame at having exposed herself sexually for the voyeuristic interests of many others. Other porno actresses have committed suicide; but many others have come and gone from the business without succumbing to such a fate.

The question remains why so many attractive young women such as Colleen Applegate chose to expose themselves in such a fashion in the first place, especially during the “golden age” of Californian porn in the late 1970s and early ’80s.

Feminists would answer that they are coerced into doing this, either specifically by abusive boyfriends or by the “patriarchy” in general.

They cite the case of Linda Boreman, who, as “Linda Lovelace”, starred in Deep Throat (1972), the first pornographic movie to receive general release in cinemas. Boreman alleged that she appeared in that film only because of the violence, or threats thereof, perpetrated by her boyfriend.

But try as they might, the pro-censorship feminists have never been able to establish that porn actresses generally have faced such pressures to perform sexually in front of a camera, and I’ve never particularly understood the argument that “patriarchy” is responsible for getting young women involved in porn.

When Western society was most truly patriarchal, great restrictions were placed upon women’s sexuality. This was the case, for example, during the Victorian era, and the coincidence of near-absolute male dominance with stringent limitations upon female sexuality is practically universal.

Yet, according to anti-porn feminists, modern “patriarchy” somehow demands that young women expose themselves sexually for the gratification of others.

In fact, the female demographic cohort to which Colleen Applegate (born 1963) belonged was the first for which there was an unquestioned expectation of independent living well into adulthood, and for which there was in turn a presumption (however reluctantly arrived at by the older generation) that chastity would not be preserved until marriage.

Or at least, this held for the middle-class family to which Colleen belonged. In any case, the insertion of such a large number of young women into the workforce coincided with the faltering of the long boom that was touched off in the United States by the country’s entry into the Second World War in 1941.

Inflation was unleashed as wage rises outstripped gains in productivity, interest rates reached double digits, the price of basic commodities (principally but not exclusively crude oil) increased steadily, and the “Keynesian” methods of pump-priming through deficit spending proved ineffective.

Throughout the 1970s, all Western economies faced sluggish economic growth, while at the same time the cost of living continued to increase, sometimes by double digits per year.

The sheer number of young people entering the workforce during this period, born in the 1950s and ’60s when fertility rates remained high, guaranteed that a large proportion of them would remain unemployed, and that many others would be stuck in low-paid service-sector jobs (as foreign competition had also battered the American industrial sector).

Young women in the workforce, especially, faced discrimination and relegation to the “pink-collar ghetto”: low-wage clerical and service work from which there was little chance of improvement or promotion.

Most workplaces, whether in the private or the (increasingly large) state sector, were managed by men who, coming from an earlier generation, found it perfectly natural to refer to female subordinates, even middle-aged women, as “girls.”

Some of these men, at least, behaved as a matter of course as though their younger female employees were happy to be harried, groped, even forced into sex.

In these circumstances, working as a sexual exhibitionist may not, at first glance, have seemed all that bad. On the other hand, for Colleen and others like her to have become involved in pornography in the first place would also have involved overcoming a general revulsion and shame about exposing oneself to others in the act of having sex.

It is considered such a private act that the words “bed” and “bedroom” are widely understood euphemisms for sex. But it wasn’t always thus. Turning to social historian Bill Bryson, we find that just a few centuries ago, people tended to be less modest than they later became.

The equation of sex with something dirty was a byproduct of bourgeois culture. Porn, as I said, gained semi-legitimacy in the wake of the counterculture, which rejected middle-class values such as the “hangups” surrounding sex.

The economic and social forces that propelled Colleen Applegate and many other pretty young American women into pornography during the “golden age” of the business in the late 1970s are also behind the sudden appearance, since the turn of the century, of young women from the former Soviet bloc in the adult-video business.

During the Cold War, a joke was told that went something like this: “What do you call a pretty girl in Warsaw [or Prague, Budapest, Moscow or any other eastern-European capital]?” The answer: “A tourist.”

In the West back then, people were so naive as to construe the homeliness of Slavic women as a congenital quality, not a byproduct of the malnutrition and general scarcity of the Communist system.

It was only a few years after the fall of command socialism that Westerners understood that female Slavs (now well-fed and infused with hope) were very often more beautiful than the women of the liberal democracies.

Russians, Czechs, Poles and so on very often embody the traditional fair-haired and blue-eyed look of the Nordic ideal. After centuries of invasion from the far east, many also have an Asiatic physiognomy that many Occidental men find alluring.

While modern Slavic women have beauty, what they don’t have is very much selling power in the labour marketplace. In this, they are in a position similar to that of young North American women thirty and more years ago. In order to thrive, it seems, not very many, but enough, of these young women from eastern Europe have opted to sell their bodies, virtually.

| Colleen Applegate as Shauna Grant, 1963-1984 |

| Colleen's grave, Farmington, Minnesota. findagrave.com |

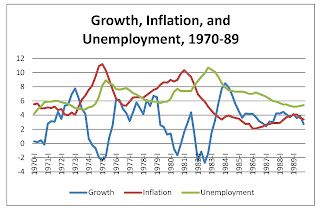

| and people think we have it bad now... www.reed.edu |

| "Tourists" all. www.theapricity.com |
